-

Writing Tests for Drush Commands

There are plenty of examples of these in the wild, but I figured I’d show a stripped-down version of an automated Kernel test that successfully tests a Drush command. The trick here is making sure you set up a logger and that you stub a few functions (if you happen to use $this->logger() and dt() in your Drush commands). Also featured in this example is the use of Faker to generate realistic test data.

```php
<?php

declare(strict_types = 1);

namespace Drupal\mymodule\Tests\Kernel {

  use Drupal\KernelTests\KernelTestBase;
  use Drupal\mymodule\Drush\Commands\QueueCommands;
  use Drush\Log\DrushLoggerManager;
  use Faker\Factory;
  use Faker\Generator;
  use Psr\Log\LoggerInterface;

  /**
   * Tests the QueueCommands Drush command file.
   */
  final class QueueTest extends KernelTestBase {

    /**
     * {@inheritdoc}
     */
    protected static $modules = [
      // Enable all the modules that this test relies on.
      'auto_entitylabel',
      'block',
      'datetime',
      'eck',
      'field',
      'file',
      'image',
      'link',
      'mymodule',
      'mysql',
      'options',
      'path',
      'path_alias',
      'system',
      'text',
      'user',
      'views',
      'views_bulk_operations',
    ];

    /**
     * The Faker generator.
     *
     * @var \Faker\Generator
     */
    protected Generator $faker;

    /**
     * The logger.
     *
     * @var \Drush\Log\DrushLoggerManager|\Psr\Log\LoggerInterface
     */
    protected DrushLoggerManager|LoggerInterface $logger;

    /**
     * The Drush queue commands.
     *
     * @var \Drupal\mymodule\Drush\Commands\QueueCommands
     */
    protected QueueCommands $drushQueueCommands;

    /**
     * Set up the test environment.
     */
    protected function setUp(): void {
      parent::setUp();

      // Install required config.
      $this->installConfig(['mymodule']);

      $this->faker = Factory::create();

      // Mock whichever logger class is available and hand it to the commands.
      $logger_class = class_exists(DrushLoggerManager::class) ? DrushLoggerManager::class : LoggerInterface::class;
      $this->logger = $this->prophesize($logger_class)->reveal();

      // Assign to the property so the test method can reach the command file.
      $this->drushQueueCommands = new QueueCommands();
      $this->drushQueueCommands->setLogger($this->logger);
    }

    /**
     * Tests the mymodule:close-all-registration-entities drush command.
     */
    public function testCloseAllRegistrationEntities(): void {
      // Create a single registration entity.
      // Just demoing simple Faker usage for blog post.
      $registration = \Drupal::entityTypeManager()->getStorage('registration')->create([
        'type' => 'appt',
        'field_echeckin_link' => $this->faker->url(),
        'field_epic_id' => $this->faker->randomNumber(9),
        'field_first_name' => $this->faker->firstName(),
        'field_has_echeckin_link' => $this->faker->boolean(),
        'field_outcome' => NULL,
        'field_phone_number' => $this->faker->phoneNumber(),
        'field_sequence_is_open' => 1,
      ]);
      $registration->save();

      // This method exists in my module's Drush command file.
      // It sets field_sequence_is_open field to 0 for all open reg entities.
      // drush mymodule:close-all-registration-entities.
      $this->drushQueueCommands->closeAllRegistrationEntities();

      // Assert that the registration entity was updated.
      $this->assertCount(1, \Drupal::entityTypeManager()->getStorage('registration')->loadByProperties(['field_sequence_is_open' => 0]));
    }

  }

}

namespace {

  if (!function_exists('dt')) {

    /**
     * Stub for dt().
     *
     * @param string $message
     *   The text.
     * @param array $replace
     *   The replacement values.
     *
     * @return string
     *   The text.
     */
    function dt($message, array $replace = []): string {
      return strtr($message, $replace);
    }

  }

  if (!function_exists('drush_op')) {

    /**
     * Stub for drush_op.
     *
     * @param callable $callable
     *   The function to call.
     *
     * @return mixed
     *   Whatever the callable returns.
     */
    function drush_op(callable $callable) {
      $args = func_get_args();
      array_shift($args);
      return call_user_func_array($callable, $args);
    }

  }

}
```

I learned this from the migrate_tools project.
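To make the dt() stub less mysterious, here is a minimal standalone sketch of what it does once Drush itself is not loaded. It assumes the same stub body as in the test file above; the message and the `@count` placeholder are made up for illustration. The stub only swaps placeholders, so it does not translate or escape anything the way a real Drush bootstrap might.

```php
<?php

declare(strict_types=1);

// Stand-in for Drush's dt(), mirroring the stub from the test file.
// It only substitutes placeholders; there is no translation or escaping.
if (!function_exists('dt')) {
  function dt(string $message, array $replace = []): string {
    return strtr($message, $replace);
  }
}

// Hypothetical message and placeholder, for illustration only.
$message = dt('Closed @count registration entities.', ['@count' => '3']);

echo $message, PHP_EOL;
```

Because the stub is wrapped in function_exists(), it stays out of the way whenever the real dt() is already defined.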

-

Using Data Providers in PHPUnit Tests

This is the before code, where a single test is run, and each scenario I’m testing could be influenced by the previous scenario (not a good thing, unless that was my goal, which it was not).

```php
/**
 * Tests the getRegistrationsWithinNumDays function.
 */
public function testGetRegistrationsWithinNumDays() {
  $firstRegDateTimeStr = '2024-03-18 08:00:00';

  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-18 09:00:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-19 13:00:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-20 14:20:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-20 17:35:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-21 17:35:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-22 08:00:00']);

  $registrations = Utils::getIdsOfRegistrationsWithinNumDays(1, $firstRegDateTimeStr);
  $this->assertEquals(2, count($registrations));

  $registrations = Utils::getIdsOfRegistrationsWithinNumDays(2, $firstRegDateTimeStr);
  $this->assertEquals(3, count($registrations));

  $registrations = Utils::getIdsOfRegistrationsWithinNumDays(3, $firstRegDateTimeStr);
  $this->assertEquals(5, count($registrations));
}
```

This is the after code, where three tests are run. Unfortunately this takes 3x longer to execute. The upside is that each scenario cannot affect the others and it’s perhaps more readable and easier to add additional scenarios.

```php
/**
 * Data provider for testGetRegistrationsWithinNumDays.
 */
public function registrationsDataProvider() {
  return [
    // Label => [numDays, expectedCount].
    '1 day' => [1, 2],
    '2 days' => [2, 3],
    '3 days' => [3, 5],
  ];
}

/**
 * Tests the getRegistrationsWithinNumDays function using a data provider.
 *
 * @dataProvider registrationsDataProvider
 */
public function testGetRegistrationsWithinNumDays($numDays, $expectedCount) {
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-18 08:00:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-19 09:00:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-20 13:00:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-21 14:20:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-21 17:35:00']);
  $this->mhpreregTestHelpers->createRegistration(['field_reg_datetime' => '2024-03-22 17:35:00']);

  $registrations = Utils::getIdsOfRegistrationsWithinNumDays($numDays, '2024-03-18 08:00:00');
  $this->assertEquals($expectedCount, count($registrations), "Failed for {$numDays} days.");
}
```

-

Skip Empty Values During Concatenation in a Drupal 10 Migration

UPDATE. You can do the same as below without the need for a custom module function. (source)

```yaml
field_address_full:
  - plugin: callback
    callable: array_filter
    source:
      - address_1
      - city
      - state
  - plugin: concat
    delimiter: ', '
```
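In plain PHP terms, the pipeline above boils down to filtering the source values and then joining what is left. Here is a rough sketch of that idea, not the Migrate API's actual code; `join_non_empty()` is a hypothetical helper name used only for this illustration.

```php
<?php

declare(strict_types=1);

/**
 * Joins the non-empty parts of an address with a delimiter.
 *
 * Hypothetical helper that approximates callback (array_filter) + concat.
 */
function join_non_empty(array $parts, string $delimiter = ', '): string {
  // With no callback, array_filter() drops every falsy value.
  return implode($delimiter, array_filter($parts));
}

echo join_non_empty(['1 Main St.', 'Portland', 'ME']), PHP_EOL;
echo join_non_empty(['2 Main St.', 'Portland', '']), PHP_EOL;
echo join_non_empty(['', 'Portland', 'ME']), PHP_EOL;
```

One thing to keep in mind: array_filter() without a callback also removes values such as '0', which is fine for address parts but may matter for other fields.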

This quick example shows one way to ignore empty values when concatenating in a Drupal 10 migration process pipeline.

Code

Migrate code:

```yaml
process:
  temp_address_parts:
    -
      plugin: skip_on_empty
      method: process
      source:
        - address_1
        - city
        - state
    -
      plugin: callback
      callable: mymodule_migrate_filter_empty
  field_address_full:
    plugin: concat
    delimiter: ', '
    source: '@temp_address_parts'
```

Module code:

```php
/**
 * Filters out empty values from an array.
 */
function mymodule_migrate_filter_empty($values): array {
  return array_filter($values, function ($value) {
    return !empty($value);
  });
}
```

Result

Example data:

```
address_1,city,state
1 Main St.,Portland,ME
2 Main St.,Portland
,Portland,ME
```

Resulting field_address_full plain text value

```
1 Main St., Portland, ME
2 Main St., Portland
Portland, ME
```

-

Using lnav to View Live DDEV Logs

lnav (https://lnav.org/) is a fantastic tool for working with log files. Its SQL query functionality is a lot like dsq (https://github.com/multiprocessio/dsq), which also impresses.

My quick tip is that lnav can read from stdin. This means you can pipe content into it. Here’s how I monitor my ddev environment in realtime. Hooray for colors!

```bash
ddev logs -f | lnav -q
```

If you haven’t explored lnav you’ll want to dive into the documentation a bit. It does a lot more than just give pretty colors to your log files / output. https://docs.lnav.org/en/v0.11.2/hotkeys.html is certainly worth bookmarking.

-

Rendering null boolean values with Grid4PHP

I’m a big fan of Grid4PHP for adding a quick CRUD user interface to a MySQL database.

I have a lot of boolean columns, like “is_telehealth” that need to start as NULL until we make a determination about the field’s value. Grid4PHP renders null booleans as “0” in the table/edit form. This is misleading, given it’s not actually a 0 value. If you add the ‘isnull’ attribute to the column it lets you save the null value, but it still renders as a “0”.

Using a custom formatter I was able to render an empty string (nothing) instead of “0” for these null booleans:

```php
$boolNullFormatter = "function(cellval,options,rowdata){ return cellval === null ? '' : cellval; }";
$cols[] = ['name' => 'is_in_gmb', 'isnull' => true, 'formatter' => $boolNullFormatter];
$cols[] = ['name' => 'is_property', 'isnull' => true, 'formatter' => $boolNullFormatter];
$cols[] = ['name' => 'is_telehealth', 'isnull' => true, 'formatter' => $boolNullFormatter];
```

-

Drupal Migrate API skip_on_multiple Process Plugin

Here’s a Migrate API process plugin I wrote in Drupal 9 that skips a property or row when more than one value exists. My use case:

- My source data has locations; each location has multiple associated organizations

- My new Drupal site has locations; each location has a parent organization

- I want to only populate the field_parent_org field (an entity reference) if there is a single organization value in the source data for the location

- I’m using a custom module called “pdms_migrate”

I’ve stripped out all of the noise from the examples below… hopefully it’s enough to help you understand the example:

-

Handling Daylight Saving Time in Cron Jobs

- Our business is located in Maine (same timezone as New York; we set clocks back one hour in the Fall and ahead one hour in the Spring)

- Server A uses ET (automatically adjusts to UTC-4 in the summer, and UTC-5 in the winter)

- Server B uses UTC (not affected by Daylight Saving Time) – we cannot control the timezone of this server

We want to ensure both servers are always referencing, figuratively, the same clock on the wall in Maine.

Here’s what we can do to make sure the timing of cron jobs on Server B (UTC) matches the changing times of Server A (ET).

```bash
# Every day at 11:55pm EST (due to the conditional, the command only runs during Standard Time, i.e. fall/winter).
55 4 * * * [ `TZ=America/New_York date +\%Z` = EST ] && php artisan scrub-db >/dev/null 2>&1

# Every day at 11:55pm EDT (due to the conditional, the command only runs during Daylight Saving Time, i.e. spring/summer).
55 3 * * * [ `TZ=America/New_York date +\%Z` = EDT ] && php artisan scrub-db >/dev/null 2>&1
```

Only one of the php artisan scrub-db commands will execute, depending on the time of year.
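If you want to double-check which UTC hour lines up with 11:55pm in Maine, a few lines of PHP will do it. This is just a sanity-check sketch; `utc_time_for()` is a hypothetical helper name, and the two dates are arbitrary mid-winter and mid-summer examples.

```php
<?php

declare(strict_types=1);

/**
 * Returns the UTC "H:i" time that matches a wall-clock time in a timezone.
 *
 * Hypothetical helper, used only to verify the cron hours.
 */
function utc_time_for(string $localDateTime, string $timezone = 'America/New_York'): string {
  $local = new DateTimeImmutable($localDateTime, new DateTimeZone($timezone));
  return $local->setTimezone(new DateTimeZone('UTC'))->format('H:i');
}

// Standard Time (EST, UTC-5).
echo utc_time_for('2024-01-15 23:55'), PHP_EOL;
// Daylight Saving Time (EDT, UTC-4).
echo utc_time_for('2024-07-15 23:55'), PHP_EOL;
```

The winter date comes out as 04:55 UTC and the summer date as 03:55 UTC, which is why the crontab needs one entry at hour 4 and one at hour 3.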

-

Using Dataview with Charts in Obsidian

Obsidian is my third most used application after Keyboard Maestro and Alfred. I’ve been using the dataview plugin since I got started with Obsidian. It’s an incredible plugin that gives you the ability to treat your notes like database records. In this example I’ll show how I use dataview to make my projects queryable, and then how I use Obsidian-Charts to make some bar charts of this data.

Using Dataview Variables

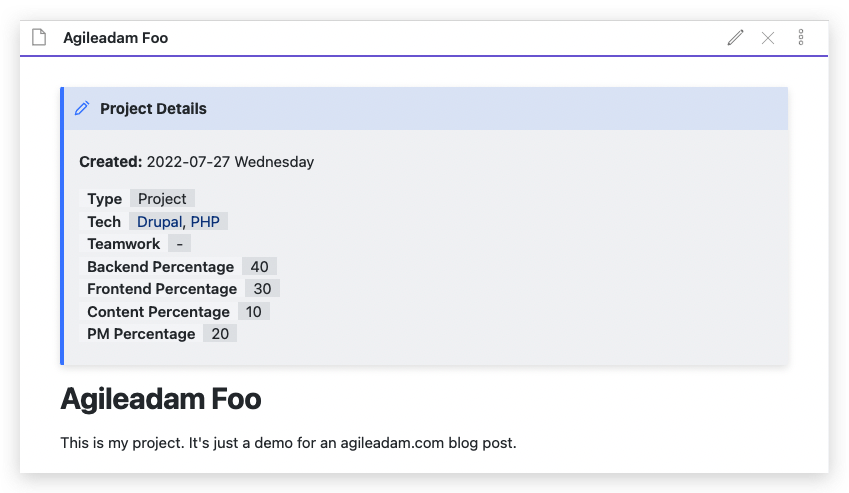

Here’s an example of a Project file in my Obsidian vault:

-

Testing Cookie Modification in Laravel 8

If there’s a better way to pass a cookie from one request to another in a PHPUnit feature test in Laravel, please let me know! Here’s one way to handle it:

```php
<?php

namespace Tests\Feature;

use Illuminate\Cookie\CookieValuePrefix;
use Tests\TestCase;

class DiveDeeperTest extends TestCase
{
    /**
     * Tests if the diveDeeper route appends the passed value to the cookie arr.
     * @return void
     */
    public function test_it_updates_path_cookie()
    {
        // Post a deeperPath to the diveDeeper route (with a starter cookie).
        // This should append the "deeperPath" value to the "path" cookie array.
        $resp = $this->withCookie('path', serialize(['/']))
            ->post(route('diveDeeper'), ['deeperPath' => 'Users']);

        // Assert that the cookie has been modified.
        $resp->assertCookie('path', serialize(['/', 'Users']));

        // If we make another post request here, it won't include our
        // modified "path" cookie. We have to retrieve it, then pass it
        // through to the next request.

        // Decrypt the cookie (now modified by the diveDeeper route).
        $cookieValue = CookieValuePrefix::remove(
            app('encrypter')->decrypt($resp->getCookie('path')->getValue(), false)
        );

        // Hit the diveDeeper route again using the modified cookie value.
        $resp = $this->withCookie('path', $cookieValue)
            ->post(route('diveDeeper'), ['deeperPath' => 'adam']);

        // Assert that the cookie has been modified again.
        $resp->assertCookie('path', serialize(['/', 'Users', 'adam']));

        // Etc...
    }
}
```

-

Any Random Saturday Using Faker

Here’s a quick one, folks. I’m using Faker in Laravel factories to generate realistic data. I have a “week end date” field in one of my models that must always be a Saturday. Here’s how I generate a unique random Saturday:

```php
date('Y-m-d', strtotime('next Saturday ' . $this->faker->unique->date()))
```
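To see what the expression does without pulling in Faker, here is a small sketch that feeds it fixed dates instead. `saturday_after()` is a hypothetical wrapper name, and the two input dates are arbitrary stand-ins for Faker output.

```php
<?php

declare(strict_types=1);

/**
 * Returns the Saturday that follows the given Y-m-d date.
 *
 * Hypothetical wrapper around the one-liner from the post.
 */
function saturday_after(string $date): string {
  return date('Y-m-d', strtotime('next Saturday ' . $date));
}

// Fixed dates stand in for $this->faker->unique->date().
$fromMonday = saturday_after('2024-03-18');
$fromFriday = saturday_after('2024-03-22');

echo $fromMonday, PHP_EOL;
echo $fromFriday, PHP_EOL;
```

Both inputs fall in the same week, so both resolve to the same Saturday. In other words, Faker's unique modifier guarantees distinct source dates, not distinct Saturdays; if the Saturdays themselves must never repeat, that would need to be enforced separately.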